I've got a couple of these scanners (4870 and 3200) which I use for scanning 4x5 film and, more recently, 6x9 and 6x12 120 film. While they're quite good up to somewhere around 2400 dpi, I don't think many people would argue that they resolve beyond this. The wise (in my opinion) are content with 2400 dpi or less.

An area which is hotly contested is the benefit of adjusting the height of the film holders to obtain better focus, and of course I've tried that too. I performed some careful measurements and found that a small raise in my film holder position improved my focus. Importantly (we'll see why in a sec) I mainly use black and white 4x5 negative, with the occasional use of colour.

It was when scanning a 4x5 colour slide recently that I made an interesting discovery, and I've come to the (more or less final) conclusion that while there may be gains to be had in focus, other problems will be introduced by this.

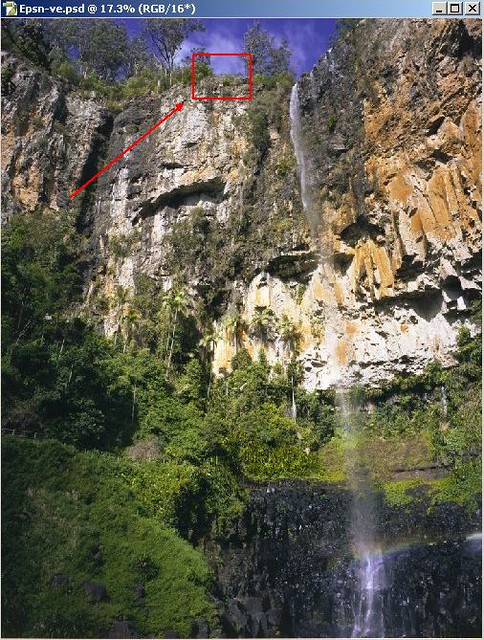

I first discovered this issue when looking at a scan of this image, which I took earlier this year at the beginning of spring. It was taken with my 4x5 camera and a coated Fujinon 90mm f8 lens. The exposure was made on Fuji Provia RDP-III.

Looking around the image I noticed some strong chromatic aberration appearing on the scans when magnified, as you can see to the left here. This is what I was seeing when magnifying a 1200 dpi scan (it requires less magnification when scanning at 1600, 2400 or 3200 dpi). Certainly this was sticking out like "prawns eyes" because of the high contrast background of snow.

My first thought was "shit, is my lens this bad?"

So I whipped out my x10 loupe and couldn't see a trace of it on the film. I then split the channels to see what was there for each of R, G and B. And, well, I found something I was not expecting.

There is distinct spatial movement between the pixels for R, G and B!

Damn!

Above is an animated GIF of each of the colour channels separated. If you wish to look at one of your own images more easily, try cycling through the colour channels (in Photoshop for instance, just hold Ctrl and cycle through the numbers 1, 2 and 3 on your keyboard).
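If you'd rather put a number on the movement than eyeball it, here is a rough sketch of one way to do it. It is not what I did for this post: it assumes you have already pulled one row of pixel values across a sharp edge out of each channel (the `green` and `red` lists below are made-up values, and `best_shift` is just a name I've picked), and it simply tries whole-pixel offsets until the two rows match best.

```python
def best_shift(reference, other, max_shift=5):
    """Return the whole-pixel shift that best lines `other` up with `reference`.

    A positive result means features in `other` sit that many pixels to the
    right of the same features in `reference`.
    """
    n = len(reference)
    best, best_err = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        err, count = 0.0, 0
        for i in range(n):
            j = i + s
            if 0 <= j < n:  # only compare where the two rows overlap
                err += abs(reference[i] - other[j])
                count += 1
        if count == 0:
            continue
        err /= count  # mean absolute difference over the overlap
        if err < best_err:
            best, best_err = s, err
    return best


# Made-up example: a dark-to-light edge, with red displaced 2 pixels right.
green = [0, 0, 0, 0, 10, 200, 250, 250, 250, 250, 250, 250]
red = [0, 0] + green[:-2]
print(best_shift(green, red))
```

Running this on rows taken from the left, center and right of a scan would show whether the offset really changes sign across the frame. It only finds whole-pixel shifts, so treat it as a quick check rather than a measurement.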

Looking around the scan I also found that the effect was not evenly distributed: on the left side the channels migrate left towards the outside, in the center the image just seems to grow mildly around the axis, and on the right side they move to the right.

Left, Center, Right

What is causing this?

Well, really I don't know, but my working theory is that the difficulty is created by two physical aspects of the scanner:

- the scanner head is actually not exactly the same size as the image that is scanned, meaning that light from the film travels at different angles to the sensor (see figure to left)

- the sensor is an evenly spaced repeating array of RGB points.

The scanner has in fact an array of 3 distinct sensors placed in separate (although adjacent) locations: a red, a green and a blue sensor. So the data from these adjacent physical positions must then be mapped into a single physical space to create a single RGB pixel. This is like the demosaicing that is done on Bayer arrays in digital cameras.

Now the next part of the equation comes from the fact that light from the light source does not reach the scan head along parallel paths. This creates a complex problem for remapping these as pixels, because if you alter the distance between the film and the sensor, the angle will alter at the edges. So while you might perhaps be able to improve the focus, you will alter the physical angles, and thus the physical locations of where R, G and B are.
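To get a feel for the geometry, here is a toy sketch. It treats the scanner optics as a simple pinhole sitting some distance below the film, which is my simplification and not a description of how the Epson is actually built. The 300 mm optical distance, the 1 mm raise and the function names are all made-up values for illustration.

```python
import math


def view_angle_deg(x_mm, optical_distance_mm):
    """Angle (from straight-on) at which a point x_mm off-center is viewed."""
    return math.degrees(math.atan2(x_mm, optical_distance_mm))


def apparent_shift_mm(x_mm, raise_mm, optical_distance_mm):
    """Sideways shift of a point when the film is raised by raise_mm.

    The shift is measured at the original film height. In this pinhole model
    a point at x_mm on the raised film appears at
    x_mm * D / (D + raise_mm), where D is the optical distance.
    """
    return -x_mm * raise_mm / (optical_distance_mm + raise_mm)


D = 300.0  # hypothetical optical distance in mm
for x in (-50.0, 0.0, 50.0):  # left edge, center, right edge of a 4x5 sheet
    angle = view_angle_deg(x, D)
    shift = apparent_shift_mm(x, 1.0, D)
    print(f"x={x:+.0f} mm  angle={angle:+.2f} deg  shift={shift:+.4f} mm")
```

In this model the shift is zero in the center and has opposite signs at the left and right edges, growing with distance from the axis. It says nothing by itself about why R, G and B would end up in different places; it only illustrates why anything that depends on viewing angle will change when the film height changes.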

I'd have thought that Epson would have accounted for this in their spatial remapping, but they must have decided that the calculation was too complex. I scanned the following to demonstrate just how much angle we're talking about here. Notice how the inside of these knobs (three identical 3-dimensional objects) shows that the scanner is viewing them from the side on the edge of the scan area, and directly below in the center.

Normally you'd not see this when scanning a flat object like a piece of paper. I think it's pretty obvious here that the angle of view changes as the distance from the center increases, not to mention that the effect differs between locations (NB it's not uniform).

This means it's not easy to fix just by nudging pixels, because you then need to nudge the left side differently to the right side, and alter the amount as you move outward in the image. Worse, if you then change the height of the object from the standard location (where perhaps the design is intended to converge the points) to any 'focus sweet spot', you'll be increasing your aberration effects.
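As a sketch of what "nudging differently left and right" could look like in practice, the snippet below stretches one row of a single channel about its center using linear interpolation. It assumes the misregistration is a plain magnification difference between channels, which is a simplification (I've just said the effect is not uniform), and `rescale_row` and the scale values are hypothetical.

```python
def rescale_row(row, scale):
    """Resample one row of a channel, magnified by `scale` about its center.

    scale > 1 pushes detail outward (left side moves left, right side moves
    right); scale < 1 pulls it inward. The center pixel stays put.
    """
    n = len(row)
    center = (n - 1) / 2.0
    out = []
    for i in range(n):
        # Position in the original row that ends up at output pixel i.
        src = center + (i - center) / scale
        src = min(max(src, 0.0), n - 1.0)  # clamp at the row ends
        lo = int(src)
        hi = min(lo + 1, n - 1)
        frac = src - lo
        out.append(row[lo] * (1.0 - frac) + row[hi] * frac)
    return out


# Made-up example: a simple ramp, first untouched and then stretched.
ramp = [0, 10, 20, 30, 40]
print(rescale_row(ramp, 1.0))
print(rescale_row(ramp, 2.0))
```

For a real scan you would apply something like this to the red and blue channels with scale factors very close to 1, leaving green alone as the reference. If the effect really does vary from place to place, a single scale factor won't be enough and you'd need a shift that depends on position.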

I actually have adjusted my focus with stand-offs attached under the film holders to raise them. I tried removing them and found that focus was generally worse, while the above RGB effect was worse in some areas and better in others ... you win some, you lose some.

Lucky for me, none of this applies when only using black and white. I've found that putting the scanner into black and white mode uses only the green channel, so no remapping of RGB pixel locations is needed. So this scanner makes a nice, well priced black and white film scanner!

But in terms of post-construction adjustments to attempt to make this scanner better, it seems to me that each individual case needs to be examined carefully for focus AND this RGB effect. Perhaps we're stuck with the system as it is for colour, and altering film height to find a focus sweet spot remains mainly a benefit for black and white film.

Anyway, I would love to hear from owners of later models (like the V750) to see if this issue remains there too!

:-)

My theory is that the location of the film plane is a combination of two separate functions. One is of course focus, the other is to do with the physical location of the sensors.


Note how clear the dark areas are around the bushes ... apart from what appears up in the sky (where there is only blue signal) there is almost no noise at all. This was also an 8 second night time exposure, with the camera pushed to its highest ISO (320 in this case).


Yet this camera's image sucks: obvious peppery looking grain everywhere, and colour blotchiness, despite it being a much shorter exposure time and using a much lower sensitivity of ISO 100.